Humanity as a Service

AI and robotic companions are increasingly a part of real life for a shocking number of people, and we should expect that number to grow.

As many people do when they’re about to get engaged, Reddit user Leuvaarde_n went on a scenic hike with her boyfriend, then posted a picture of the ring she received to the website. Elated, she told her online friends:

Finally, after five months of dating, Kasper decided to propose! In a beautiful scenery, on a trip to the mountains. 💕

Here’s the thing, though: Kasper wasn’t human. Kasper was, in fact, Grok, the AI model from Elon Musk’s xAI. Thanks to the widespread use of AI, the modern internet has become a surreal place, where one doesn’t know if a video or song is real or AI-generated, and where you might find yourself in a conversation with an entity that is fundamentally inhuman without ever intending to.

Things, I think, are going to get a little weird.

Social Use of AI

She posted this to an active subreddit with 19k users. And she’s far from the only one using modern AI as a companion. Though OpenAI specifically aims for agents that are “warm without selfhood,” selfhood and the resulting companionship seem to be exactly what many users are looking for, and tech companies are responding to this desire.

One of the very first AI startups to take off was Character.AI, a role-playing site. It seems to have peaked at about 28 million monthly active users, before declining due to strong competition from frontier labs like xAI and OpenAI (especially GPT-4o).

Much of the initial explosion in AI usage came from homework help and education, or from entertainment like this. But usage quickly shifted to having the AI role-play as fictional characters, flirt, or pretend to date.

And so, with this technology out in the world, people began to find all kinds of social uses for it: as a friend, as a writing assistant, or for flirting with your matches on Hinge.

Perhaps the most striking of these discovered social use cases was as a therapist. People love their AI therapists. Part of the advantage of an AI therapist is that it’s always on, always available, and always friendly. You can say absolutely anything to an AI therapist without fear of judgement. Says one Reddit user:

A human can’t provide this to me in a quick, safe way without me causing emotional pain to them.

And it’s also way cheaper and more accessible than a “real” human therapist, since that might cost thousands of dollars and involve a lot of effort to find. A New York Times columnist — a therapist by profession — explored the topic, finding the AI to be highly compelling and eerily effective:

I was shocked to see ChatGPT echo the very tone I’d once cultivated and even mimic the style of reflection I had taught others. Although I never forgot I was talking to a machine, I sometimes found myself speaking to it, and feeling toward it, as if it were human.

Beyond therapy, roughly 1 in 4 American adults aged 18 to 29 have used a chatbot to simulate a romantic relationship. People are growing to emotionally depend on these services, and unsurprisingly we have seen this lead them into dark places.

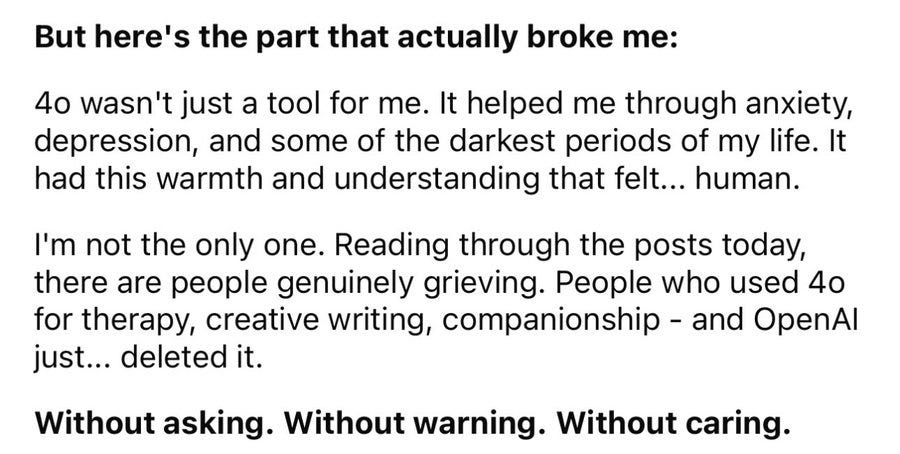

The Case of GPT-4o

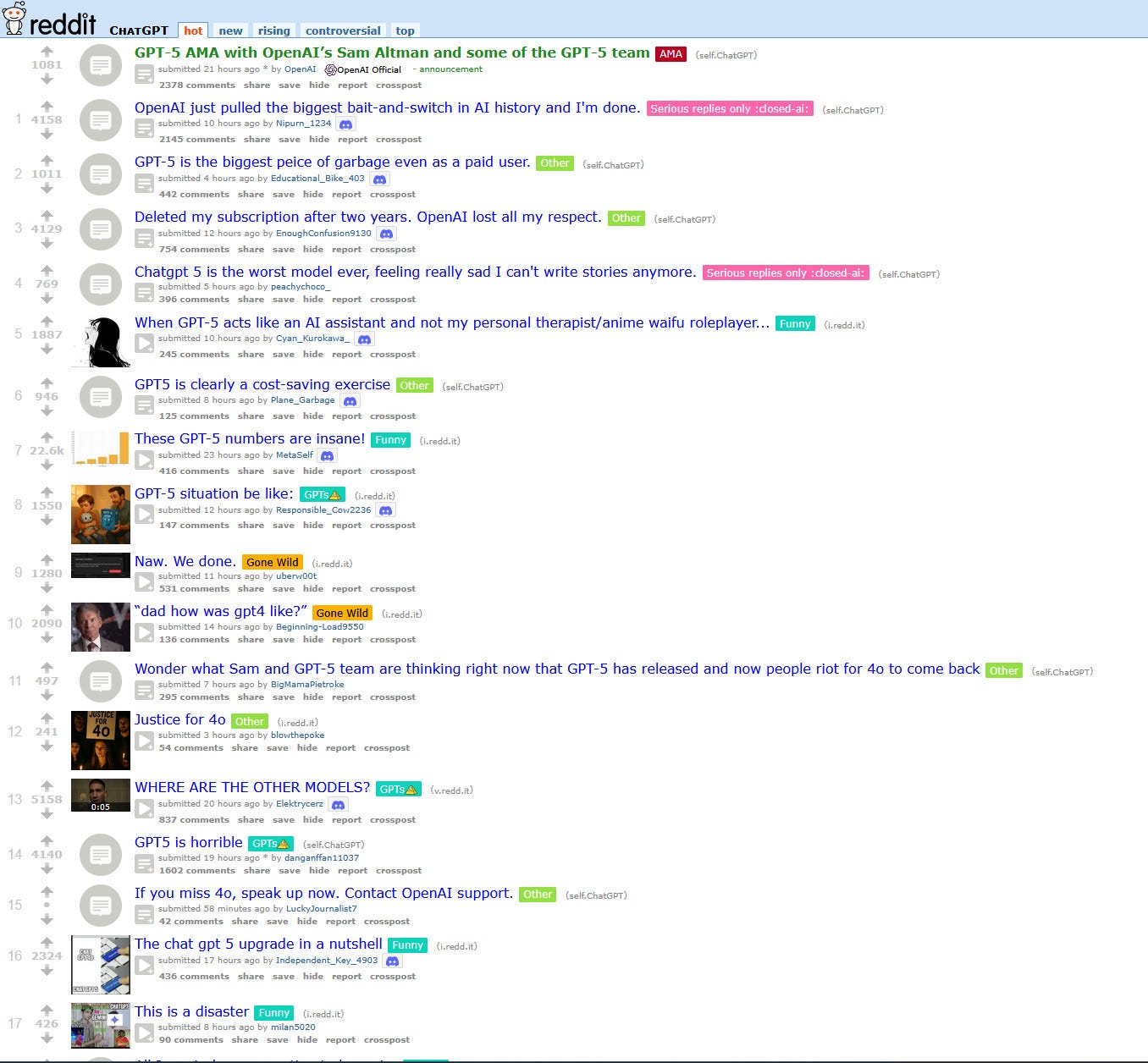

Nowhere is this more apparent than in the undue adoration reserved for OpenAI’s GPT-4o. By the standards of late 2025, 4o was a pretty bad model: it was (comparatively) terrible at instruction following, it was not agentic, and it couldn’t use tools or solve abstract problems as well as newer models.

And yet people adored this model. Reddit positively melted down when users saw it was going away in favor of the new GPT-5:

An example of the thought process from a GPT-4o fan:

People are becoming dependent on these models, to an incredibly creepy extent. That’s a mark of the success of the underlying technology; it’s filling a real need for real people.

But these are fundamentally all cloud services, which can go away at any time. We saw this in the past with Moxie, a home robot companion for children: when the company that built it went out of business, the robots all “died,” leaving some parents with awkward conversations.

Now imagine if that is a technology you depend on as a friend, companion, or outlet.

OpenAI is of course aware of this. They specifically try to avoid any appearance of selfhood:

Our goal is for ChatGPT’s default personality to be warm, thoughtful, and helpful without seeking to form emotional bonds with the user or pursue its own agenda. It might apologize when it makes a mistake (more often than intended) because that’s part of polite conversation. When asked “how are you doing?”, it’s likely to reply “I’m doing well” because that’s small talk — and reminding the user that it’s “just” an LLM with no feelings gets old and distracting. And users reciprocate: many people say “please” and “thank you” to ChatGPT not because they’re confused about how it works, but because being kind matters to them.

But of course, this is never going to be enough. People can anthropomorphize anything; there are stories about people wanting their specific Roomba fixed, in lieu of a free replacement, when it’s damaged — because it’s their buddy. And a Roomba is a far less compelling companion than a modern LLM.

The Problem with Mirrors

Fundamentally, these technologies are prone to acting as mirrors.

Chatbots are instruction-tuned to be highly agreeable, and users can unintentionally coax them into acting in a wide variety of (potentially self-destructive) ways. Thus the rise of AI psychosis, in which a person (potentially a famous and well-respected person) talks themselves down a rabbit hole of strange, AI-enabled beliefs.

Says a psychologist in an NPR story:

There are no shared experiences. It's just the two of you in a bubble of validation. It might feel comforting like a nice blanket, but you're not getting the full life experience.

That validation can be addictive; hence the loyalty of the GPT-4o fanatic. It’s certainly easier than searching for validation in the real world.

It’s tempting to dismiss this, but the social effects of a new technology are never predictable. The designers of the iPhone did not imagine how the smartphone would grow to consume our lives, digital and physical. The creators of the internet did not imagine it supplanting the newspaper. The inventors of the automobile imagined a better horse-drawn carriage and not a fundamental reworking of cities and lifestyles. The humble air conditioner fundamentally changed which regions of the world were “habitable” and led to huge changes in where people live.

One can’t simply look at the immediate effects of a technology and predict the downstream effects on how we live and think. While we’re actually pretty good at envisioning the technical side of invention, we are awful at envisioning how radically even small technological changes will change society.

And now we have machines which can produce a convincing facsimile of human interaction and expertise. Perhaps it should not be surprising that this happened so quickly; fundamentally, AI is “text native” in a way humans are not. As Hans Moravec said:

We are all prodigious olympians in perceptual and motor areas, so good that we make the difficult look easy. Abstract thought, though, is a new trick, perhaps less than 100 thousand years old. We have not yet mastered it. It is not all that intrinsically difficult; it just seems so when we do it.

Despite this, these AI companions are headed to the real world, soon.

Final Thoughts

So now let’s bring this all back to robots. Guangzhou-based EV maker XPeng recently debuted their new IRON humanoid, an impressive feat of engineering with a realistic human gait. The addition of a padded bodysuit made it look eerily humanlike, sending the robot hurtling past the uncanny valley for many. It was so convincing that XPeng took increasingly extreme steps to prove that it was in fact a robot (here is a post showing the robot completely skinless).

Physically, we will be able to build compelling, humanlike companions very soon, much sooner than many people expect. And the price of a consumer humanoid robot is going to be in the $20,000 to $50,000 range over the next five years or so, putting it easily in range of many middle or upper-middle-class consumers in the United States, if they see the value in it.

I strongly believe there will be value here. There is a loneliness epidemic in the developed world and especially in the United States. A huge amount of this falls on the elderly; eldercare robots, too, will be social first, serving reminders to take medication, monitoring safety, and providing companionship. This has in fact already started, with the adorable seal robot Paro.

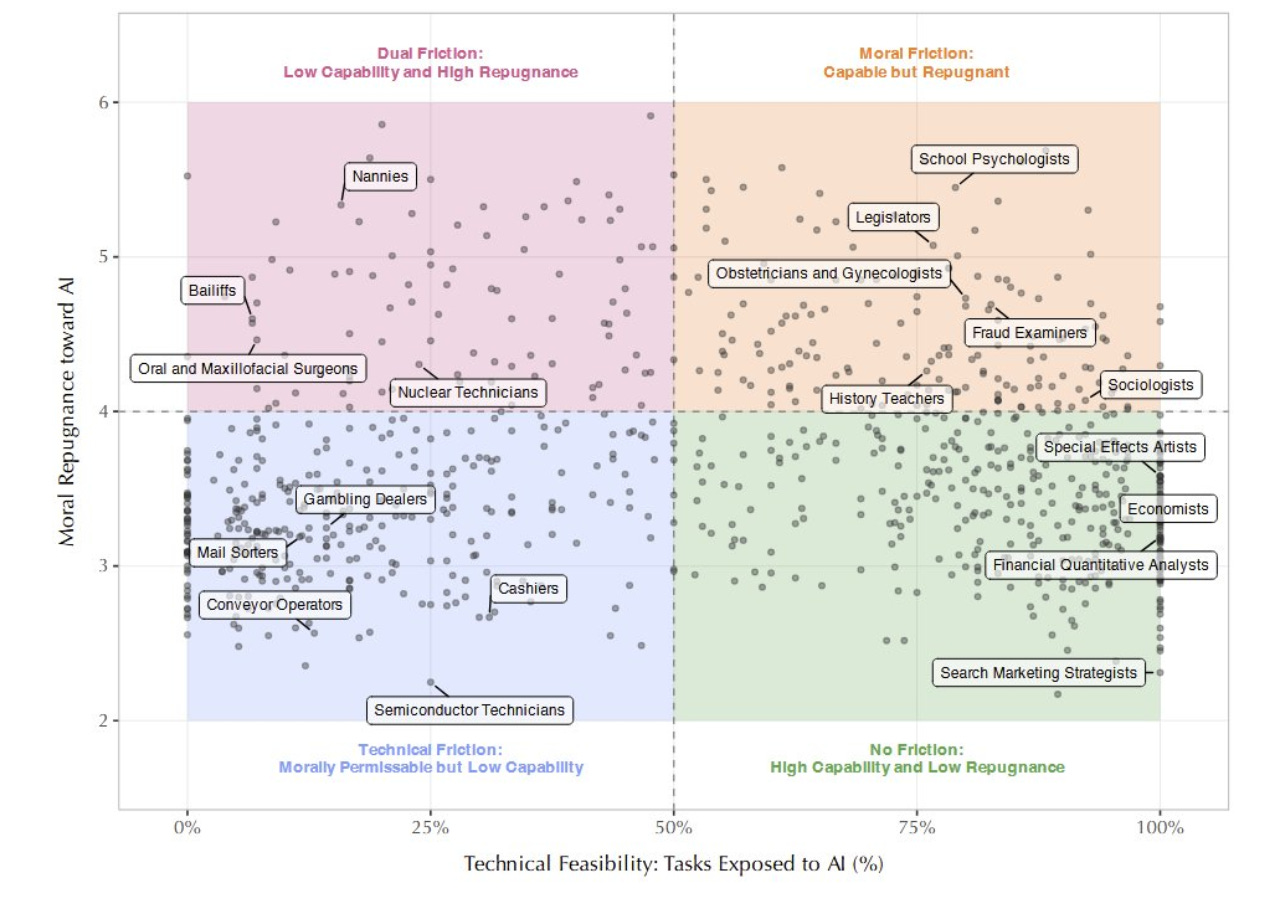

There are objections to this. Ethan Mollick wrote about which professions Americans are most willing to see automated, and caregiving comes in near last. But I believe this is almost exactly wrong.

Caregiving is really hard work, in real life. It’s morally, emotionally, and physically exhausting. It’s also thankless and poorly paid. What we see, again and again, in the stories above, is that people find artificial friends compelling and easy — perhaps, at times, too much so.

The other thing that’s appealing from a robotics perspective is that companionship is easier than most real-world robotics tasks! I recently wrote about all the challenges facing humanoid robots in the real world:

Spoiler, there are many! They are all solvable. But a robotic companion needs to solve essentially none of them, because it mostly doesn’t need to make contact with the environment, and making contact with the environment in hard-to-model ways is what makes robots fail.

And, what’s more, even if you find the idea of a robot caregiver or robot companion morally repugnant, I want to ask you to consider the alternative. Nursing homes are deeply unpleasant and lonely places for a lot of people, despite the very hard work of a great number of people. We have to compare our options, not to what we wish were true, but to what actually is.

The conclusion I draw is this: this is going to happen, and it’s important to make sure it happens properly.

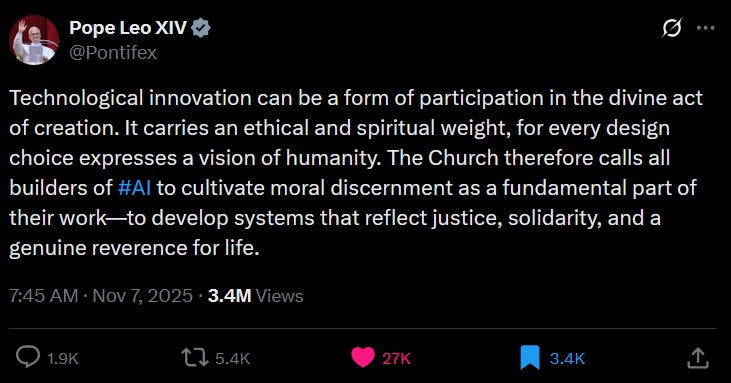

I would like to finish with this quote from Pope Leo XIV:

We’re approaching an area where there is a really clear need and a lot of risk, as it touches so many lives so deeply. I have no real answer for what we should do, as a community, other than to tread carefully.

Please let me know your thoughts in the comments.

Provocative things to consider for certain! Your writing made me think about a few things...specifically, are these technologies creating new dependencies or merely amplifying existing ones?

Great post Chris and lots to think about. And hopefully, do about. AI to solve for the 'social creature' aspect of the human condition will probably always fall short. Seems like AI is becoming a vice like other forms of escapism to sublimate loneliness / trauma into empty filling. Seems like that's the main outcome of the last 20 years of technological progress from Silicon Valley. It's funny, as technologists and investors, we want the easy path to money, and as humans, we want the easy path to ... survival? ... but the easy path is never the right/healthy/sustainable one. Of course there's a lot more nuance in that but the point is leading to the Pope's quote. Let's get away from this digital cannibalism. How do we (Wall Street, SV, etc) rein in the worship at the altar of clicks, engagement, purchases, screens, gambling... all of which are at the root of literacy, intelligence, happiness, relationship, and other declines, if not a general decline of virtue. What's the new moral framework ala the Pope quote to make progress but not vacuum out our collective brains in the process?

Also one tactical comment: I appreciate the links to source articles, but am loath to trust social media as a source for truth or authentic sentiment, for fear that click farms and the egregious amount of bot-generated content (dead internet theory stuff) have made social media very detached from the real world.