Automating the Kill Chain

Some new developments in military robotics and artificial intelligence

Let me start with a vision of a potential future:

You wake up with a jolt in your foxhole, a little dug-out somewhere in the no-man’s land between two armies. A light is flashing on your beat-up tablet.

Maybe you hope for a bit that it’s a message from home, or even from command -- it’s been weeks since you’ve seen any other humans on your side of the war. Maybe there aren’t many of you left.

It’s not, though. It’s the enemy, moving through the trees a few dozen kilometers away, spotted by a little drone the size of your hand. At your command, a small army flies into action: the rotors of bomber-drones whir to life, and rolling autonomous gun-platforms take up strategic positions in cover, cutting off any chance of escape. Robot dogs carrying guns sweep into nearby buildings, scuttling up bombed-out stairwells and setting up lines of fire. Anti-personnel drones loaded with explosives swarm through the trees.

Kill them all, you say, and your robotic troops spring into action. They know exactly what to do, directed by an artificial intelligence with a superhuman ability to fuse disparate data sources and make decisions.

You lie down and close your eyes. When you wake back up, the enemy are all dead.

On the battlefields of eastern Ukraine, drones are becoming increasingly autonomous. When given a target (a Russian soldier or vehicle), they will “lock on” and use their on-board artificial intelligence to pursue and kill that target. At the same time, defense startups have begun building combat-ready robots with increasing levels of autonomy.

In addition, the whole business of fighting a war seems ripe for automation. The kill chain, the sequence of information-gathering, decision-making, and action-taking that leads to deploying lethal force, is well suited to artificial intelligence.

Let’s talk about how and why.

What is the Kill Chain?

In a war, soldiers do not cleanly agree to meet in a small area and shoot each other, as in a video game. Instead, enemies must be found; they must be identified; assets must be allocated to engage them; and then finally munitions must be used to destroy them. This is the “kill chain,” and it stretches from generals in Kyiv down to the men in the trenches on the front lines of the Ukraine war, or from US Navy admirals down to the sailors who fire missiles at boats in the Caribbean.

Large parts of this, especially in a modern military environment, look a lot like the sort of data analysis tasks for which AI is particularly suited. AI models like Anthropic’s Claude can analyze the massive amount of data produced by a modern military, identify trends, and respond faster and — one hopes — more accurately than human analysts can.

This has led the US military to use Claude for military operations, like the US strike on Venezuela that led to the capture of President Maduro. It’s also led to conflict this week between the Pentagon and Anthropic, as Anthropic has a “red line”: Claude cannot be used to make decisions to kill people on its own.

AI Agents for Combat

Deploying drone swarms will require autonomy. Drones simply cannot be remotely piloted in swarm-sized numbers: right now, each of the common FPV drones that inflict so many casualties in Ukraine needs its own pilot.

All of this will change. As autonomy gets better, it will be possible for one person to manage multiple drones, say in order to strike a position several times in rapid succession, pinning its defenders down or hitting enemies as they attempt to reposition. One pilot will manage three drones, then ten, then a hundred.

And it won’t stop with drones. Some of these robots will be armed ground vehicles. We’ve recently seen companies like Tencore pitching their ground robots as part of combined forces that escort and work with humans on the ground, protecting them from aerial drones. Countering this threat will require superhuman reaction times, and will likely push these systems toward more and more decision-making authority of their own.

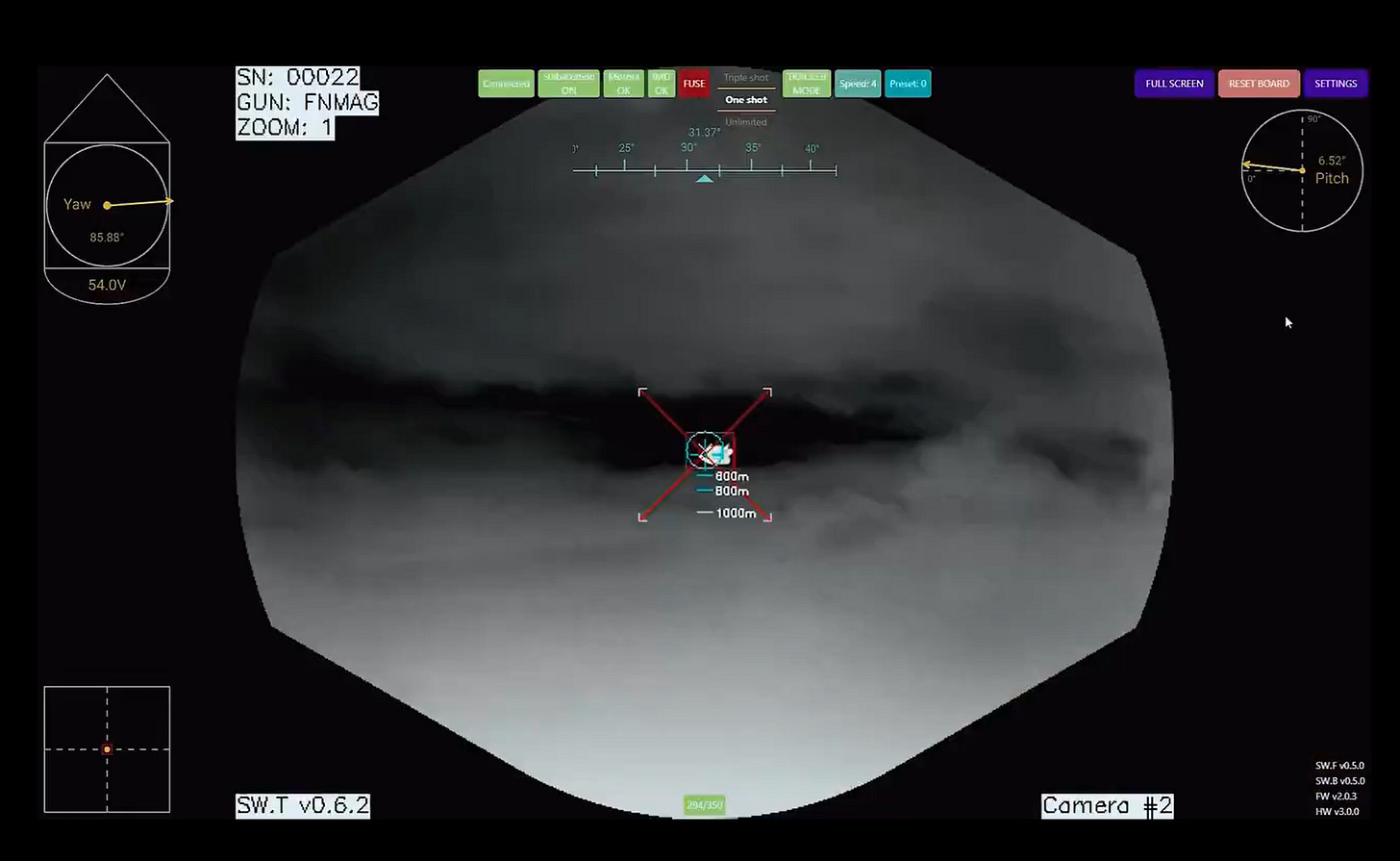

One of the clearest visions came from Scout AI co-founder and CEO Colby Adcock, who proudly announced the Fury Advanced Vehicle Orchestrator in an X post. This is an agentic system which can take a natural-language command and turn it into action. In their demo, they show a human commander giving a high-level command, and then AI agents orchestrating a response: finding, fixing, and destroying a target vehicle using a combination of ground and aerial robots.

To understand what’s different about Fury, you need to understand that Scout is using a fundamentally different method from the military robots of the past: the system is built on a family of Vision-Language-Action foundation models.

These models can consume video feeds and sensory data from different robots, and aggregate them to build a single unified picture of a battlefield with which to make decisions. The commander says “find and destroy the red vehicle,” and the robots execute: they gather information, process it, and complete the mission.

Final Thoughts

For now, these robots are still semi-autonomous at most. The majority of combat robots in Ukraine are under full human control: they are remotely operated, and their purpose is to keep a human soldier out of the line of fire while he does his job. The semi-autonomous drones in Ukraine right now are not terribly intelligent; they’re still not much smarter than a guided missile. The robot ground vehicles, as I’ve written about before, are largely holding trench lines and conducting ambushes. It will be a long journey before Fury is much more than a demo video for a VC pitch deck.

Besides, to an extent, autonomous weapons have always been with us. A land or sea mine is a crude version of an autonomous weapon, wired to blow when an enemy (or anyone else) makes contact with it.

Mines are also an example of the realistic failings of such systems: they make mistakes. A lot of mistakes. People keep dying for decades after a conflict ends, killed by mines or unexploded munitions. Perhaps autonomous robots will be the same; perhaps when demolishing some derelict building ten years after the end of the Ukraine war, a robot sentry will activate and gun down a handful of workers.

There are other concerns, too. From Dario Amodei:

Of course, these weapons also have legitimate uses in the defense of democracy: they have been key to defending Ukraine and would likely be key to defending Taiwan. But they are a dangerous weapon to wield: we should worry about them in the hands of autocracies, but also worry that because they are so powerful, with so little accountability, there is a greatly increased risk of democratic governments turning them against their own people to seize power.

Autonomous military forces will require good governance, keeping them accountable and strictly under the control of civilian authorities. AI agents for military operations radically increase “AI risk.”

There are positives to these systems, too, of course, or at least distant, potential positives. By taking human warfighters out of the direct line of fire, they could save lives on both sides: robotic systems are more disposable, after all. If a situation is ambiguous, it’s much more reasonable to lose a few $300,000 robot ground vehicles than a few of your human soldiers, so maybe you give the order to hold fire and wait for more information. These systems can also make decisions faster, using more information, and will perhaps one day be able to make those decisions better and with less error, matching the trends we have seen in other parts of society.

This is a uniquely strange and risky area for robotics, though. I write this mostly because I think it’s important everyone understand that this is here, and it is happening today.

Please like, share, and let me know what you think below.

Good overview of the technical trajectory. One dimension I think deserves more attention is the political economy of war rather than just the mechanics. The piece rightly notes that robotic systems could save lives by keeping humans out of the line of fire, but that same dynamic cuts the other way.

Democratic accountability over the use of force has historically relied on a brutal feedback mechanism: body bags come home, voters get angry, wars become politically unsustainable. Remove that, and you don't just change how wars are fought, you change when and how often they start.

The real risk of autonomous military systems isn't that they become uncontrollable on the battlefield. It's that they make war cheap enough (politically) that the threshold for using force drops dramatically. Wasted defense spending generates op-eds; flag-draped coffins end presidencies. A leader who can project force without risking real domestic opposition faces a fundamentally different political calculus, and history suggests that calculus will resolve toward more frequent use of force, not less.

So the question isn't just whether a human stays in the kill chain for ethical or legal reasons, it's whether keeping humans at risk in warfare is, perversely, one of the strongest constraints we have on the decision to go to war in the first place.

Thanks for raising this important issue. I've just written a brief summary about the kill chain, the US bombing of a school in Iran, and artificial stupidity in my latest post: https://robotsandstartups.substack.com/p/the-known-unknowns-of-up-and-to-the?r=3k8pj&utm_campaign=post&utm_medium=web&showWelcomeOnShare=true

Which was inspired by reading Kill Chain from Artificial Bureaucracy and a few others. https://artificialbureaucracy.substack.com/p/kill-chain?lli=1&utm_source=%2Finbox&utm_medium=reader2