Building a Home Robot With Zero Robot Data

Sunday Raises $165 Million Series B

Data-first home robot company Sunday Robotics has announced its Series B raise, backing a unique and contrarian take on how to deploy in-home robots today. Their approach uses zero robot data, yet still relies exclusively on real-world data. This shouldn't be a huge surprise: co-founder Cheng Chi was behind the game-changing Universal Manipulation Interface (UMI) paper. The idea is to collect all of the training data with a handheld tool, an exact duplicate of which is mounted on the robot arm.

This is a short post with some thoughts on what makes this company, and its vision for how to build robots, unique. It's a "quick reaction" post, so apologies in advance for any errors. If you like my writing, please like and subscribe.

Why Zero Robot Data

Part of what makes the Sunday vision of robotics unique is their focus on glove-style data collection. Robot data is expensive, as their head of product says on X.

To collect real-world data, you need:

Skilled operators

Real robots, at real-robot prices

Good teleoperation setups, which are often clones of the whole robot

The ability to move all of this into different environments to collect data

All of this is rather expensive. With handheld tools, and assuming their data is as good as real robot data, you need only the first item: skilled operators. By skipping the other three requirements, Sunday can jump directly to paying for high-quality data at scale.

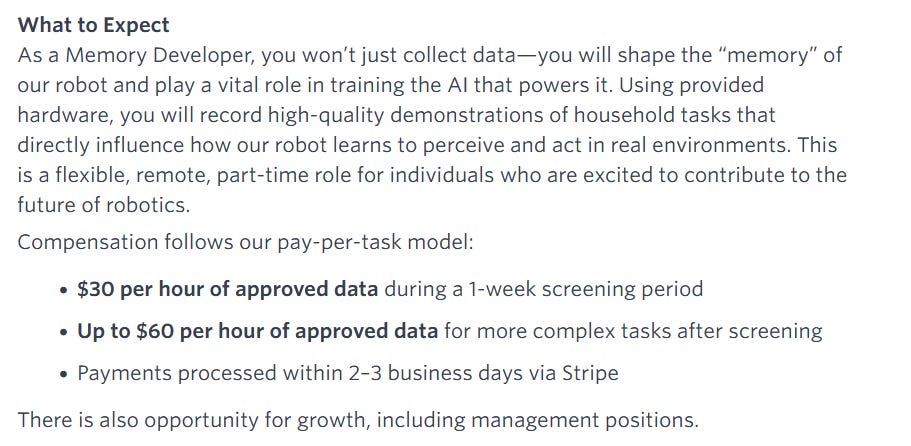

Sunday also pays very well for data: up to $60 per hour, according to public job postings, and likely more for other positions and for specific customer requirements.

The fact that tools are easier to carry around also matters. The key to scaling robot learning is data diversity, something I have written about before. Tools make data easy to scale, because operators can simply walk around and use them for any task they want. If you're interested in this line of reasoning, a few Robopapers episodes explore a similar premise.

Although we've repeatedly seen that diverse scenes and tasks are important, it remains very hard to get a good distribution of data from teleoperation alone. Other approaches exist, like large-scale human video data, but all of them have tradeoffs. My (boring) personal take is that we'll probably combine many different data sources, but I like seeing Sunday commit to an extreme to see how far it can take them.

Rapid Hardware Iteration

So, how does this work in practice?

The Sunday Robotics approach seems to have centered on fast hardware iteration. The images above, shared on X, show how they iterated on the gripper. It's a unique three-finger design, a "mitt" with an exact duplicate mounted on the end of the robot arm. Data collectors also wear a "hat" that captures wide field-of-view RGB camera images, similar to the baseball cap worn by their robot, Memo.
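To make the "exact duplicate" idea concrete, here is a minimal planar sketch of one way tool recordings could become robot actions, loosely inspired by the relative-trajectory action representation described in the UMI paper. It is not Sunday's pipeline: the `Pose2D` type, the helper names, and the simplification from full 3D poses to 2D poses are all assumptions for illustration. The idea is that if each recorded tool pose is expressed relative to the tool's current pose, the same relative motion can be replayed starting from wherever the robot's identical gripper happens to be.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose2D:
    """Hypothetical planar gripper pose: position (x, y) in metres, heading theta in radians."""
    x: float
    y: float
    theta: float


def compose(a: Pose2D, b: Pose2D) -> Pose2D:
    """Apply pose b, expressed in the frame of pose a (a rigid-body transform product)."""
    c, s = math.cos(a.theta), math.sin(a.theta)
    return Pose2D(a.x + c * b.x - s * b.y, a.y + s * b.x + c * b.y, a.theta + b.theta)


def inverse(p: Pose2D) -> Pose2D:
    """Return q such that compose(p, q) is the identity pose (angles are not wrapped)."""
    c, s = math.cos(p.theta), math.sin(p.theta)
    return Pose2D(-c * p.x - s * p.y, s * p.x - c * p.y, -p.theta)


def relative_actions(tool_traj: list[Pose2D]) -> list[Pose2D]:
    """Express each future tool pose relative to the tool's current (first) pose."""
    to_current = inverse(tool_traj[0])
    return [compose(to_current, p) for p in tool_traj[1:]]


def replay_on_robot(robot_now: Pose2D, actions: list[Pose2D]) -> list[Pose2D]:
    """Turn relative actions into targets for the robot's identical gripper."""
    return [compose(robot_now, a) for a in actions]


# Usage: a tool trajectory recorded in some arbitrary world frame...
tool_traj = [Pose2D(1.0, 0.0, 0.0), Pose2D(1.1, 0.0, 0.0), Pose2D(1.1, 0.1, math.pi / 2)]
actions = relative_actions(tool_traj)
# ...replayed from wherever the robot's gripper currently is.
targets = replay_on_robot(Pose2D(0.0, 0.0, 0.0), actions)
```

In this sketch the world frame the operator happened to record in never matters, only the motion relative to the gripper's current pose. That shortcut is only clean when the gripper on both sides is the same, which is the point of mounting a duplicate of the mitt on the robot.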

Their final glove costs around $400, cheap enough to equip a distributed network of roughly 500 operators collecting high-quality, diverse data at massive scale.
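The arithmetic behind that claim is easy to write down. The sketch below uses only figures quoted in this post (about $400 per glove, roughly 500 operators, and the top advertised rate of $60 per hour). It ignores the hat, spares, shipping, and overhead, and it assumes, for illustration only, that every operator is paid the top rate.

```python
# Figures quoted in this post; everything printed below is derived from them.
GLOVE_COST_USD = 400      # approximate price of one data-collection glove
NUM_OPERATORS = 500       # approximate size of the operator network
MAX_HOURLY_PAY_USD = 60   # top pay rate in public job postings (assumed for everyone)


def fleet_hardware_cost(glove_cost: float, operators: int) -> float:
    """One-time cost of giving every operator one glove (no hats, spares, or shipping)."""
    return glove_cost * operators


def fleet_hourly_labor_cost(hourly_pay: float, operators: int) -> float:
    """Cost of one hour in which every operator collects data at the given rate."""
    return hourly_pay * operators


hardware = fleet_hardware_cost(GLOVE_COST_USD, NUM_OPERATORS)
labor_per_hour = fleet_hourly_labor_cost(MAX_HOURLY_PAY_USD, NUM_OPERATORS)
print(f"Gloves for the whole network: ${hardware:,.0f}")              # $200,000
print(f"One fleet-wide hour at the top rate: ${labor_per_hour:,.0f}")  # $30,000
print(f"Gloves cost about {hardware / labor_per_hour:.1f} fleet-hours of pay")  # 6.7
```

Under these assumptions, equipping the entire network costs about as much as six or seven hours of fleet-wide collection, which suggests that labor, not hardware, quickly becomes the dominant cost.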

In public comments, the company has discussed how much time and effort went into data collection alone: they spent much of their time building the tools and collecting data, and comparatively little training their models.

Finally, they repeatedly stress the importance of building a full-stack team, arguing that iterating on hardware, data, and training together is crucial to deploying general-purpose robots in the real world.

Final Thoughts

This is a very data-first version of robotics. They have taken a strong bet that good data at scale is crucial, and that the particular type of data that can be collected with UMI tools is ideal. It’s an interesting bet and a promising one; I’m interested to see how it goes and hope to see Memo out and about in the real world soon.

Comments

I'm skeptical, but wish them luck. What they're doing isn't unique; if it works, others can quickly copy it. Self-driving is also still struggling with a data-only, end-to-end approach, if Tesla's deployments are anything to go by.

Nice data collection and business strategy.

I was also wondering if there is a transfer gap. A human hand in a glove and a robot gripper have different contact dynamics, different compliance, and different force profiles. Do you have any information about that?

Their robots are so cute, by the way.